Jörg Denzinger's Research

Engineering self-organizing, self-adapting emergent multi-agent systems

From its beginnings, a major goal of the area of multi-agent systems (then still called distributed artificial intelligence) was to harness the power of a group of cooperating agents to solve tasks better, which could mean faster, with higher success rates, or with better resulting solutions. Achieving synergy, i.e. an improvement factor larger than the number of agents used (a rough definition), was the holy grail of a lot of research (see our teamwork and TECHS projects). A key to achieving synergy in our systems was their ability to organize themselves and to adapt to the particular instance of a task they were supposed to solve.

Since then, self-organization and self-adaptation have been recognized as key properties of multi-agent systems that achieve emergent properties, i.e. properties that the MAS has, but that none of the individual agents have (again, a rough definition). Synergy has to be considered an emergent property if the synergetic improvement makes the difference between solving a task and not solving it. There are many self-organizing and/or self-adapting systems in nature, and many of them can be considered multi-agent systems. Many of these natural systems have served as inspiration for the engineering of self-organizing, self-adapting emergent multi-agent computer systems.

Our approach revolves around the concept of digital infochemicals, which moves the concept of infochemicals (the superclass of pheromones and allelochemicals, well-known in biology) into the computer world. The general idea is to have agents leave a certain amount of an infochemical in the (digital) environment, where other agents can observe it and use the information it contains for their own decision making. There are many different types of infochemicals that can be used to structure the information in the environment and to structure the interactions between the agents. We presented a design pattern for systems using digital infochemicals in our EASe 2009 paper (see the bibliography page).
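
As a rough illustration of the general idea (not the design pattern from the EASe 2009 paper), the following minimal Python sketch lets agents deposit typed infochemicals into a shared environment and lets other agents sense them when making a local decision. The names `Environment`, `deposit`, `sense`, `tick` and `choose_location` are hypothetical, and the evaporation step is an assumption borrowed from pheromone-style systems rather than something stated above.

```python
from dataclasses import dataclass, field


@dataclass
class Environment:
    """Shared digital environment holding infochemical amounts per location."""
    evaporation_rate: float = 0.1  # assumed decay per tick, not from the source
    # Maps (location, infochemical type) to the current amount.
    deposits: dict = field(default_factory=dict)

    def deposit(self, location, kind, amount):
        """An agent leaves `amount` of infochemical `kind` at `location`."""
        key = (location, kind)
        self.deposits[key] = self.deposits.get(key, 0.0) + amount

    def sense(self, location, kind):
        """Another agent observes how much of `kind` is present at `location`."""
        return self.deposits.get((location, kind), 0.0)

    def tick(self):
        """Let every deposit decay and drop those that have faded away."""
        remaining = {}
        for key, amount in self.deposits.items():
            amount *= 1.0 - self.evaporation_rate
            if amount > 1e-3:
                remaining[key] = amount
        self.deposits = remaining


def choose_location(env, candidates, kind):
    """A purely local decision: pick the candidate with the strongest signal."""
    return max(candidates, key=lambda loc: env.sense(loc, kind))


env = Environment()
env.deposit("A", "task-here", 1.0)
env.deposit("B", "task-here", 3.0)
env.tick()
print(choose_location(env, ["A", "B", "C"], "task-here"))  # prints B
```

Keying deposits by infochemical type is one simple way to reflect the point that different types structure both the information in the environment and the interactions between agents.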

One of the key obstacles to getting self-organizing, self-adapting systems used in practice (in addition to testing them) is the efficiency of the solutions they produce. The properties such systems target, like local decision making, scalability, robustness, flexibility and adaptability to the environment, are usually needed in applications whose core problems also include a dynamic nature, low observability and poor controllability, which makes achieving efficient solutions very difficult.

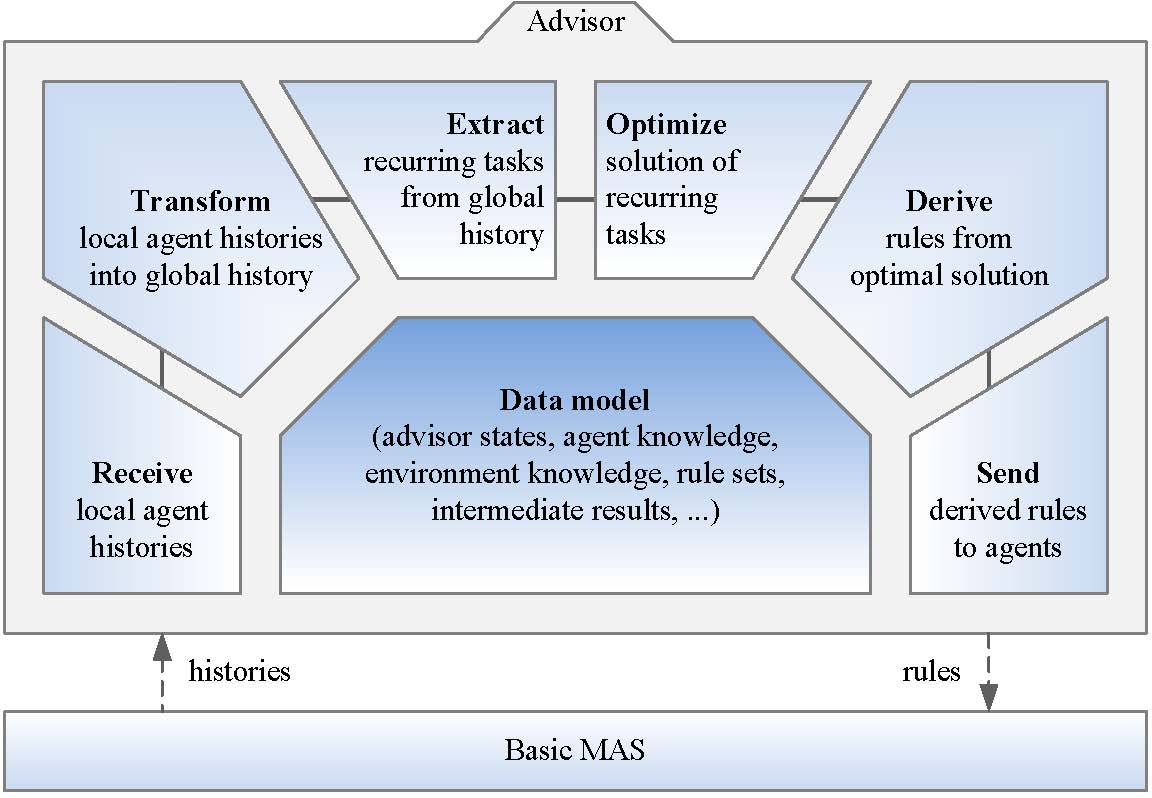

To deal with this problem, we developed the concept of an

advisor, an additional agent team

member that collects information about the problem instances a

self-organizing emergent system solves over time, identifies

inefficient behavior of the system and creates advice for some

of the agents aimed at avoiding the inefficient behavior in the

future. The following picture shows the sequence of steps the

basic advisor version goes through in order to improve efficiency:
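
The advisor's cycle as described in the text (collect, identify inefficiency, create advice) might be sketched as follows. This is only an illustration under stated assumptions, not the advisor from our papers: each run is assumed to be a mapping from task identifiers to the cost the system incurred, and `reference_cost` is a hypothetical function giving the cost an efficient solution would have. The names `recurring_tasks`, `advise` and the `tolerance` threshold are likewise invented for this sketch.

```python
from collections import Counter


def recurring_tasks(runs, min_occurrences=2):
    """Tasks that appear in at least `min_occurrences` of the observed runs."""
    counts = Counter(task for run in runs for task in run)
    return {task for task, n in counts.items() if n >= min_occurrences}


def advise(runs, reference_cost, tolerance=1.2):
    """Flag recurring tasks whose average observed cost is clearly too high."""
    advice = []
    for task in sorted(recurring_tasks(runs)):
        observed = [run[task] for run in runs if task in run]
        average = sum(observed) / len(observed)
        target = reference_cost(task)
        if average > tolerance * target:
            # A real advisor would derive a rule for a specific agent here.
            advice.append({"task": task, "observed": average, "target": target})
    return advice


# Hypothetical history: cost per task in three problem instances.
runs = [
    {"t1": 10.0, "t2": 4.0},
    {"t1": 14.0, "t3": 5.0},
    {"t1": 12.0, "t2": 4.0},
]
reference = {"t1": 6.0, "t2": 4.0, "t3": 5.0}

print(advise(runs, reference.get))
# prints [{'task': 't1', 'observed': 12.0, 'target': 6.0}]
```

Task `t3` occurs only once and is therefore never considered, which mirrors the reliance on recurring tasks.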

Note that a key assumption behind the use of an advisor is that the problem instances the system solves contain a substantial number of recurring tasks that the advisor can identify and use as the basis for its advice. Many types of advice are possible (see our SOAR 2010 paper). We suggest representing advice as exception rules for the agent whose efficiency we want to improve. So far, we have evaluated two rather different types of advice:

- ignore rules and

- pro-active rules.
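
To make the notion of advice as exception rules more concrete, here is a minimal Python sketch. The two rule types are not defined on this page, so the reading used here is an assumption: an ignore rule makes an agent skip something its normal behavior would react to, and a pro-active rule makes it act before its normal trigger occurs. `ExceptionRule`, `decide`, `default_behavior` and the situation fields are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ExceptionRule:
    condition: Callable[[dict], bool]  # when the exception applies
    action: str                        # what the agent does instead


def default_behavior(situation):
    """Hypothetical base behavior: react to any announced task."""
    if situation.get("announced"):
        return f"serve {situation['announced']}"
    return "idle"


def decide(situation, rules):
    """Exception rules are checked first; otherwise the agent acts as usual."""
    for rule in rules:
        if rule.condition(situation):
            return rule.action
    return default_behavior(situation)


# Assumed reading of an ignore rule: do not react to task t7.
ignore_rule = ExceptionRule(lambda s: s.get("announced") == "t7", "ignore t7")
# Assumed reading of a pro-active rule: act at time 40 before t3 is announced.
proactive_rule = ExceptionRule(
    lambda s: s.get("time") == 40 and not s.get("announced"),
    "move to site of t3",
)
rules = [ignore_rule, proactive_rule]

print(decide({"announced": "t7", "time": 10}, rules))  # prints ignore t7
print(decide({"time": 40}, rules))                     # prints move to site of t3
print(decide({"announced": "t2", "time": 12}, rules))  # prints serve t2
```

Because the rules only override the base behavior in the situations they name, the agent keeps its local, self-organizing decision making everywhere else.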

For more on our ideas around self-organizing, self-adapting emergent multi-agent systems, please refer to the papers cited on our bibliography page. A list of the people who are or were involved in this research can be found here.


Last Change: 5/12/2013